Photo Location Accuracy: 7 Clues That Matter

Why photo location accuracy changes from one image to another

Two photos can seem alike but produce very different location results. An original phone image with GPS tags, clear signage, and a wide street view gives a tool much more to work with than a cropped screenshot from social media.

That difference matters before upload and after the result appears. A strong file can support map placement, coordinates, camera details, and a useful explanation inside a photo location workflow. A weak file may still produce a best match, but it should be read with more caution.

The goal is not to guess whether AI is "good" or "bad" in general. The goal is to judge how much evidence your specific photo carries, then read the result like a clue-based investigation rather than a promise of perfect certainty.

What metadata can tell you before visual analysis starts

GPS tags, timestamps, and camera details that support location clues

Metadata gives the fastest starting evidence. The Library of Congress Exif format guide says the Exif family stores structured metadata inside common image files. That metadata can include camera settings, time details, geographic data, and thumbnails. The same guide says GPS support arrived in Exif 2.0 and was improved in 2.2. That helps explain why an original file may already contain machine-readable location fields before visual analysis starts.

When those fields are present, the site can begin from stronger evidence. GPS tags can narrow the search area, timestamps can support context, and camera details can help confirm whether the file is an original capture or a later export. That does not make the result automatic, but it does shorten the path from image upload to a credible location match.
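As a rough illustration of what "machine-readable location fields" means, the sketch below converts Exif-style GPS values into signed decimal degrees. The tag names (`GPSLatitude`, `GPSLatitudeRef`, `GPSLongitude`, `GPSLongitudeRef`) come from the Exif GPS fields. The plain dictionary of already-decoded numeric values and the helper names are assumptions for this example, not a description of how any particular tool reads files.

```python
from fractions import Fraction

def dms_to_decimal(dms, ref):
    """Convert a (degrees, minutes, seconds) triple to signed decimal degrees."""
    degrees, minutes, seconds = (Fraction(v) for v in dms)
    value = degrees + minutes / 60 + seconds / 3600
    # South latitudes and west longitudes are negative by convention.
    if ref.upper() in ("S", "W"):
        value = -value
    return float(value)

def gps_from_tags(gps_tags):
    """Return (lat, lon), or None when any required GPS field is missing.

    gps_tags is a hypothetical dict of already-decoded Exif GPS values.
    """
    required = ("GPSLatitude", "GPSLatitudeRef", "GPSLongitude", "GPSLongitudeRef")
    if not all(key in gps_tags for key in required):
        return None
    lat = dms_to_decimal(gps_tags["GPSLatitude"], gps_tags["GPSLatitudeRef"])
    lon = dms_to_decimal(gps_tags["GPSLongitude"], gps_tags["GPSLongitudeRef"])
    return lat, lon
```

A file whose tags are missing simply returns `None` here, which is the signal to fall back on visual clues.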

What changes when the photo is exported, edited, or shared

Exported or shared copies often carry less evidence than the original file. Apple says location coordinates can be embedded in photos when camera location access is enabled. Its desktop Photos app also includes an "Include location information" option during sharing and export. In practice, that means one version of an image may still hold location fields while another version of the same shot may not.

This is why edited gallery exports, messaging-app copies, and screenshots deserve a different expectation level. If location tags are missing, the system has to lean harder on visible clues such as signs, building styles, terrain, and road markings. The result can still be useful, but the confidence should come from the whole evidence stack, not from one surviving detail.
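One way to see what a shared copy has lost is to compare its metadata fields with those of the original. The sketch below assumes both files have already been read into plain dictionaries keyed by Exif tag name. The function names and the dictionary shape are hypothetical and chosen only for illustration.

```python
# Exif GPS fields needed to recover a latitude/longitude pair.
LOCATION_FIELDS = ("GPSLatitude", "GPSLatitudeRef", "GPSLongitude", "GPSLongitudeRef")

def lost_fields(original_tags, copy_tags):
    """Names of metadata fields present in the original but absent from the copy."""
    return sorted(set(original_tags) - set(copy_tags))

def has_location(tags):
    """True only when every field needed for coordinates survived."""
    return all(name in tags for name in LOCATION_FIELDS)

original = {
    "Model": "ExamplePhone",  # placeholder values, not real data
    "DateTimeOriginal": "2024:05:01 10:30:00",
    "GPSLatitude": (48, 51, 29.6), "GPSLatitudeRef": "N",
    "GPSLongitude": (2, 17, 40.2), "GPSLongitudeRef": "E",
}
shared_copy = {"Model": "ExamplePhone"}

print(lost_fields(original, shared_copy))
print(has_location(original), has_location(shared_copy))
```

If `has_location` is false for the copy, the original file is the better upload whenever it is still available.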

Seven photo clues that improve location detection

Distinctive landmarks, signs, and readable text

Clue 1 is uniqueness. A famous bridge, a station entrance, a mountain ridge, or a hotel facade gives the model something specific to compare. Generic walls, empty rooms, and tight close-ups do not.

Clue 2 is readable local information. Street signs, shop names, transit symbols, license plate formats, and even the language on posters can quickly narrow the region. A photo that shows both a landmark and local text is usually much easier to place than one that only shows a face or a small object.

Road design, architecture, and terrain patterns

Clue 3 is built environment. Lane markings, traffic sign shapes, utility poles, roof styles, and sidewalk design often point to a country, city, or neighborhood pattern. These details are small, but together they create a strong geographic fingerprint.

Clue 4 is natural setting. Coastlines, desert colors, tree types, snow lines, and mountain shapes help rule places in or out. The best location results usually come from images that show both man-made and natural context instead of one flat background.

Resolution, framing, and visible detail

Clue 5 is image quality. A sharp original file preserves more edges, text, and small objects than a compressed repost. When the frame is soft, heavily filtered, or saved at low resolution, the system loses many of the signals it needs to compare place-specific details.

Clue 6 is scene coverage. Wide framing helps because it shows more relationships between objects, roads, skylines, and terrain. A narrow crop may keep one interesting object but remove the clues that explain where that object sits in the real world.

When multiple clues raise confidence

Clue 7 is agreement across evidence types. The strongest results appear when metadata, visible landmarks, text, road patterns, and terrain all point in the same direction. That is much more reliable than a result built from one isolated clue.

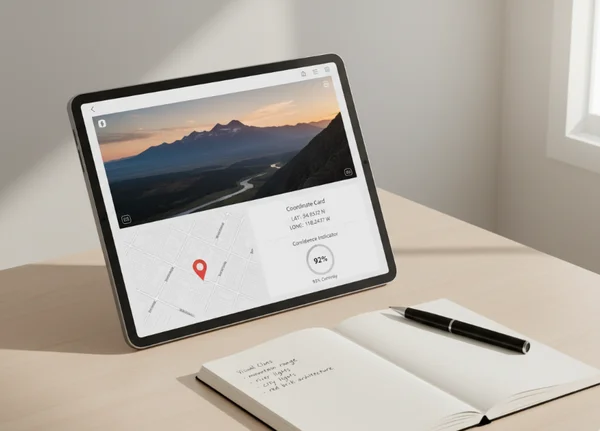

This is also where the site's explanation layer matters. A good result is not only a place name. It is a place name supported by coordinates, map context, camera details, and a reasoned trail that the reader can inspect inside the interactive map results.
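The idea of agreement across evidence types can be sketched as a simple vote. This is a toy model for reading results, not the scoring method any real tool uses. The clue names and the place labels below are made up for the example.

```python
from collections import Counter

def evidence_agreement(clues):
    """Summarize how many clue types point to the same place.

    clues maps a clue type to the place it suggests, or None when that
    clue is absent from the photo. Returns (place, agreeing, available).
    """
    votes = Counter(place for place in clues.values() if place is not None)
    if not votes:
        return None, 0, 0
    place, agreeing = votes.most_common(1)[0]
    return place, agreeing, sum(votes.values())

clues = {
    "metadata": "Lisbon",
    "landmark": "Lisbon",
    "text": "Lisbon",
    "road_pattern": None,  # not visible in this hypothetical photo
    "terrain": "Porto",
}
place, agreeing, available = evidence_agreement(clues)
print(f"{place}: {agreeing} of {available} available clues agree")
```

Three of four available clues agreeing is a stronger position than one clue standing alone, even if that single clue looks convincing.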

How to judge three common photo scenarios

Original phone photo with intact metadata

This is the best-case starting point. Original files often keep the richest metadata, and they usually preserve resolution as well. If the scene also contains visible landmarks or text, the upload has both machine-readable evidence and visual evidence working together.

Edited export with partial clues left

This middle case is common for photographers and content creators. The image may still look sharp, but some metadata may be reduced, rewritten, or removed during export. In that situation, recognizable buildings, road patterns, and terrain become more important because they have to carry more of the location argument.

Screenshot or social media copy with no GPS data

This is the hardest case, but it is not always hopeless. A screenshot may still show a skyline, a distinctive storefront, a mountain silhouette, or local language that gives the system something real to compare. The key is to expect a broader search and to read the final match as a clue-driven estimate rather than the fixed answer that the lost original file might have supplied.

If you work with these weaker files often, a visual clue analysis tool is more helpful than a simple reverse lookup. It can combine the remaining scene details with any metadata that survived.
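The three scenarios above can be written down as a small decision rule. The inputs and cutoffs here are assumptions made for illustration. Real files are messier, and a tool may weigh these signals differently.

```python
def classify_scenario(has_gps, has_camera_details, is_screenshot):
    """Map what survived in a file to one of the three scenarios described above."""
    if is_screenshot or not (has_gps or has_camera_details):
        # No usable metadata: visual clues must carry the whole argument.
        return "screenshot or social media copy"
    if has_gps and has_camera_details:
        # Machine-readable and visual evidence can work together.
        return "original with intact metadata"
    # Some metadata survived, but not enough to stand on its own.
    return "edited export with partial clues"

print(classify_scenario(has_gps=True, has_camera_details=True, is_screenshot=False))
print(classify_scenario(has_gps=False, has_camera_details=True, is_screenshot=False))
print(classify_scenario(has_gps=False, has_camera_details=False, is_screenshot=True))
```

The label is only a starting expectation. A screenshot with a clear landmark can still outperform an original file of a blank wall.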

What a confidence score should and should not mean

Strong evidence versus best-match guesses

A confidence score should reflect evidence quality, not magic certainty. GPS.gov accuracy guidance says GPS-enabled smartphones are typically accurate to within a 4.9-meter (16-foot) radius under open sky. Buildings, bridges, and trees can make that accuracy worse.

That fact changes how a location result should be read. Even an embedded GPS tag carries some error, so a high score is strongest when the image also contains visual cues that fit the same place. A lower score does not always mean the system failed. It may simply mean the photo lacks enough distinct evidence for a tight match.
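To make the 4.9-meter figure concrete, the sketch below measures the great-circle distance between a tagged coordinate and a visually estimated one using the haversine formula, then checks whether the two fall within that open-sky radius. The coordinates are illustrative, the function names are assumptions, and treating the radius as a hard cutoff is a simplification, since real accuracy varies with surroundings.

```python
import math

EARTH_RADIUS_M = 6_371_000   # mean Earth radius in meters
OPEN_SKY_RADIUS_M = 4.9      # typical smartphone figure cited from GPS.gov

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def within_gps_radius(tagged, estimate, radius_m=OPEN_SKY_RADIUS_M):
    """True when the estimate lies inside the assumed GPS error radius of the tag."""
    return haversine_m(*tagged, *estimate) <= radius_m

tagged = (48.85837, 2.29448)    # illustrative coordinates
estimate = (48.86000, 2.29450)  # a visual match a short walk away
print(round(haversine_m(*tagged, *estimate)), "meters apart")
print(within_gps_radius(tagged, estimate))
```

A visual match that lands well outside the radius is not automatically wrong, but it is a reason to look again at which piece of evidence deserves more weight.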

Why context matters more than a single number

A number becomes more trustworthy when it comes with context. Look at the map, the coordinates, the visible landmarks, the camera details, and the explanation of why that location outranked other options. That full package is what helps a reader decide whether the result is ready to use for memory organization, travel research, or archive work.

This also protects against overconfidence. The platform is designed to explain and narrow possibilities, not to act like a forensic certification service. Treat the confidence score as one part of a confidence-based analysis report, not as a replacement for your own review of the clues.

What to do next before you upload a photo

Before the next upload, use a short checklist. Keep the original file when possible. Prefer images with wide scene context, readable local details, and visible landmarks. Be careful with screenshots, cropped reposts, and heavily edited exports because they often carry less usable evidence.

After the result appears, compare the confidence score with the rest of the evidence. Check whether the map, coordinates, visible scene, and explanation reinforce one another. When those pieces line up, the result is much easier to trust and reuse in future photo organization.

The most reliable habit is simple: upload the best available version of the image, then read the output as evidence, not hype. That approach makes an AI photo location tool more useful, more transparent, and more realistic for everyday photo investigation.